The Cost Structure That Makes AI Non-Optional

Bringing a New Molecular Entity (NME) to market costs, on average, US $2.8 billion when accounting for the cost of capital and the failures that subsidize every approval. That figure comes from the Tufts Center for the Study of Drug Development and has not meaningfully declined in a decade despite incremental process improvements. The timeline runs 12 to 15 years from target hypothesis to regulatory approval, and roughly 90% of drug candidates that enter Phase I never reach patients. Those three numbers, taken together, define the structural problem that AI-driven drug discovery is trying to solve.

The pressure is not theoretical. Patent cliffs are accelerating. Between 2024 and 2030, branded drugs generating more than $180 billion in annual U.S. revenues face loss of exclusivity, according to IQVIA data. Companies that cannot replenish their pipelines fast enough will face revenue collapses that no amount of price management can offset. Generic and biosimilar entrants are filing Paragraph IV certifications and Abbreviated Biologics License Applications faster and earlier than ever. The strategic answer to a shorter commercial window is a faster, cheaper, higher-probability discovery engine, and AI is the most credible candidate to deliver one.

What makes this moment different from prior computational drug design waves, whether QSAR-driven efforts in the 1990s or structure-based design in the 2000s, is the convergence of three independent developments: the availability of massive labeled biological datasets, the hardware (GPU clusters and cloud TPUs) needed to train large models on those datasets, and algorithmic advances, particularly transformer architectures and diffusion models, that dramatically improve generative and predictive accuracy. Each prior wave lacked at least one of the three. All three exist simultaneously now, which is why industry adoption is no longer experimental.

- $2.8B: Average NME development cost (capital-adjusted)

- 90%: Phase I candidates that never reach patients

- 12–15 yrs: Typical target-to-approval timeline

- $180B+: Branded revenue facing LOE by 2030 (IQVIA)

Section Takeaways

Why the Economics Force the Issue

- At $2.8 billion per NME, with roughly 90% of candidates that enter Phase I never reaching patients, the industry cannot sustain current R&D economics without a step-change in efficiency; incremental improvements will not be enough.

- The simultaneous maturation of large datasets, GPU compute, and transformer architectures is what separates today’s AI wave from prior computational chemistry cycles that stalled.

- Patent cliff pressure between 2024 and 2030 creates a board-level urgency to compress discovery timelines, making AI adoption a capital allocation question as much as a scientific one.

- Companies that treat AI as a pilot project rather than a core pipeline function will fall behind peers who are already generating IND-ready candidates through AI-native workflows.

Target Identification: Deep Learning, GANs, and LLMs in Practice

Target identification is the step where a research team selects a protein, gene, or biological pathway to modulate therapeutically. Get it wrong and the entire downstream investment is wasted. Historically, the process relied on genetic association studies, phenotypic screening, and educated inference from the published literature, which means it was slow, expensive, and heavily dependent on the breadth of individual scientists’ reading habits. AI changes each of those constraints simultaneously.

Deep Learning Models: What They Actually Do

Deep learning models for target identification process layered datasets (protein structures, gene expression profiles, molecular interaction maps, and disease progression metrics) to extract features that human pattern recognition cannot. They do not merely surface correlations that already exist in the literature. They identify non-obvious relationships between genomic variants and disease phenotypes, and they predict protein-protein interactions that are experimentally costly to characterize. In neurodegenerative disease, where target space is poorly mapped and animal models translate badly to humans, this capability is particularly valuable. Amyotrophic Lateral Sclerosis (ALS) research has benefited from AI target identification specifically because traditional methods produced so few tractable targets in 30 years of serious effort.

Generative Adversarial Networks (GANs) extend beyond classification and ranking to actual generation. A GAN trained on known protein structures can propose entirely novel protein conformations that could serve as targets, expanding what the field considers ‘druggable.’ The same architecture enables transfer learning: knowledge encoded from well-characterized oncology targets can be applied to rare disease biology where labeled data is sparse. That transfer is not perfect, but it meaningfully accelerates hypothesis generation in underexplored areas.

Large Language Models (LLMs) trained on biomedical literature, such as BioGPT, ChatPandaGPT, and proprietary variants built by companies like Insilico Medicine, handle a different problem. The published biomedical literature now runs to tens of millions of papers, and no scientist reads more than a fraction of what is relevant to their therapeutic area. LLMs identify connections between biological entities across that corpus, generating hypotheses about target biology that an individual researcher would be unlikely to arrive at independently. They also surface potential biomarkers from unstructured text, which feeds directly into patient stratification strategies for later clinical stages.
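The idea of connecting entities across papers that no single reader has combined can be illustrated with a far simpler technique than an LLM: Swanson-style "ABC" co-occurrence linking. The sketch below is a stand-in for intuition only, not how BioGPT or any vendor tool works, and every entity name in it is a hypothetical placeholder.

```python
from collections import defaultdict
from itertools import combinations

# Toy corpus: each "paper" is reduced to the set of entities it mentions.
# All entity names are hypothetical placeholders.
papers = [
    {"GENE_A", "PATHWAY_X"},
    {"GENE_A", "PATHWAY_X", "PROTEIN_P"},
    {"PATHWAY_X", "DISEASE_D"},
    {"PROTEIN_P", "DISEASE_D"},
    {"GENE_B", "PATHWAY_Y"},
]

def build_cooccurrence(docs):
    """Map each entity to the set of entities it co-occurs with in any paper."""
    neighbors = defaultdict(set)
    for doc in docs:
        for a, b in combinations(sorted(doc), 2):
            neighbors[a].add(b)
            neighbors[b].add(a)
    return neighbors

def indirect_links(neighbors, source):
    """Rank entities never mentioned alongside `source` by shared intermediates."""
    direct = neighbors.get(source, set())
    found = {}
    for candidate, candidate_neighbors in neighbors.items():
        if candidate == source or candidate in direct:
            continue
        bridges = direct & candidate_neighbors
        if bridges:
            found[candidate] = sorted(bridges)
    # More bridging entities first; ties broken alphabetically.
    return sorted(found.items(), key=lambda kv: (-len(kv[1]), kv[0]))

neighbors = build_cooccurrence(papers)
hypotheses = indirect_links(neighbors, "GENE_A")
for entity, bridges in hypotheses:
    print(f"GENE_A -> {entity} via {bridges}")
```

In this toy corpus no paper mentions GENE_A and DISEASE_D together, yet two intermediates link them, which is the kind of candidate hypothesis a literature model would hand to a scientist for review.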

Pfizer, Novartis, Roche, and AstraZeneca have all embedded AI technologies into their target identification workflows, typically through a combination of internal build and external partnership. That adoption is no longer experimental. It reflects production-level use of AI outputs to select which programs get funded.

IP Valuation Analysis

Recursion Pharmaceuticals: The Recursion OS and Its Patent Architecture

NVIDIA’s $50 million investment in Recursion Pharmaceuticals was made specifically to scale the Recursion Operating System (OS), the company’s proprietary platform for AI-driven target identification and drug repurposing. That deal did not merely provide capital. It validated Recursion’s platform as infrastructure-grade, the kind of asset that can underpin multiple programs across multiple disease areas.

From an IP valuation standpoint, the Recursion OS has two layers of defensibility. The first is the dataset: Recursion has generated one of the largest proprietary biological imaging datasets in the industry, built through years of high-content screening. That data is not publicly available, and reproducing it would take competitors years and hundreds of millions of dollars. The second is the algorithmic layer, which includes patents covering the application of specific machine learning architectures to biological image analysis for target identification purposes.

Platform IP of this type is valued differently from compound-specific patents. A compound patent covers one or a cluster of molecules. Platform IP can generate royalty streams and licensing fees across an effectively unlimited number of programs. Recursion’s partnership agreement with Roche and its Genentech unit, structured with milestone payments and royalties, reflects that platform valuation rather than a single-asset transaction. For IP teams benchmarking comparable deals, the Recursion-NVIDIA structure offers a useful reference point for how platform IP commands a strategic premium over molecule-specific assets.

The FDA’s 2024 Signal: 12 AI Oncology Drugs Fast-Tracked

The FDA’s decision in 2024 to fast-track 12 oncology drugs with AI-assisted target identification in their development history is the clearest regulatory signal yet that the agency accepts AI-derived target hypotheses as scientifically credible starting points. This matters for IP strategy because fast-track designation affects exclusivity timelines. A drug that moves faster through development reaches its patent-protected commercial window sooner, compressing the gap between first filing and first revenue. For molecules where the composition-of-matter patent was filed early in the AI-assisted discovery process, faster development preserves more patent term.

Section Takeaways

Target Identification: The Genuine Gains and the Real Limits

- Deep learning and GAN-based target identification genuinely expands the druggable proteome by generating novel structural hypotheses rather than just ranking known candidates.

- LLMs extract biological hypotheses from literature at a scale no human team can match, but the output quality depends entirely on corpus curation and model fine-tuning; generic LLMs without biomedical fine-tuning are poor substitutes for specialized tools.

- Recursion’s platform IP architecture, combining proprietary datasets with algorithmic patents, is the emerging template for how AI-native biotechs command valuation premiums over traditional discovery companies.

- The FDA’s 2024 fast-track decisions for AI-identified oncology targets indicate regulatory receptivity, but the agency has not changed its evidentiary standards for clinical data quality.

- Transfer learning from oncology to rare disease targets is promising but requires caution: the biological assumptions embedded in the source domain may not transfer cleanly to the target domain.

Lead Discovery and Optimization: The Generative Chemistry Roadmap

Lead discovery and optimization, the hit-to-lead and lead optimization phases, are where AI has produced the most tangible and auditable results. This is also the area where vendor claims are most frequently overstated, so the distinction between what models genuinely predict well and what they cannot yet handle reliably matters for anyone allocating R&D budget.

De Novo Design: The Generative Chemistry Technology Roadmap

Technology Roadmap

Generative Molecular Design: Five Stages of Maturity

Stage 1

2016–2019

Variational Autoencoders (VAEs) for molecule generation: VAEs learned compact latent representations of chemical space and sampled from those representations to propose novel molecules. Early results were promising but generated many chemically unrealistic structures. Validity filters and synthetic accessibility scores were required as post-processing steps.

Stage 2

2019–2021

GAN-based generation with reinforcement learning reward signals: GANs trained with reinforcement learning feedback from docking scores and ADMET predictors improved the rate of generating molecules with desired properties. The primary limitation was mode collapse, where the generator converged on a narrow chemical series rather than exploring diverse chemical space.

Stage 3

2021–2023

Graph Neural Networks (GNNs) for molecular property prediction: GNNs represent molecules as graphs with atoms as nodes and bonds as edges, learning spatial and electronic features unavailable to string-based representations like SMILES. GNNs substantially improved binding affinity prediction accuracy and enabled multi-objective optimization across potency, selectivity, and ADMET simultaneously.

Stage 4

2023–2025

Diffusion models and 3D equivariant networks: Diffusion models, applied to 3D molecular structures rather than 2D graphs, generate molecules in three-dimensional space conditioned on a binding pocket. Pocket-conditioned 3D generation of this kind, used in tools like DiffSBDD (diffusion-based) and Pocket2Mol (an autoregressive equivariant model rather than a diffusion model), produces candidates with better geometric fit to the target. AlphaFold3’s release extended this capability by predicting protein-ligand co-complexes rather than the protein structure alone.

Stage 5

2025–Present

Multimodal foundation models and active learning loops: The current frontier integrates protein language models, molecular generation models, and wet-lab experimental feedback into closed-loop active learning systems. The model proposes candidates, the lab synthesizes and assays a subset, experimental results update the model, and the cycle repeats. Insilico Medicine’s INS018_055 program is the most publicly documented example of a drug designed through this type of iterative AI-wet-lab loop.
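The propose, assay, update cycle described in Stage 5 can be sketched in a few lines. This is a toy illustration under loud assumptions: candidates are reduced to a single number, `run_assay` is a made-up stand-in for a wet-lab measurement, and the surrogate model is a nearest-neighbor lookup rather than a foundation model.

```python
import random

random.seed(0)

def run_assay(x):
    """Hypothetical stand-in for a wet-lab assay: activity peaks near x = 0.7."""
    return 1.0 - (x - 0.7) ** 2 + random.gauss(0, 0.01)

def predict(x, measured):
    """Surrogate model: reuse the activity of the nearest assayed candidate."""
    nearest = min(measured, key=lambda m: abs(m - x))
    return measured[nearest]

def uncertainty(x, measured):
    """Distance to the nearest assayed candidate, a crude uncertainty proxy."""
    return min(abs(m - x) for m in measured)

# Candidate "molecules" represented as one-dimensional descriptors.
pool = [i / 100 for i in range(101)]
# Seed experiments at the two ends of the descriptor range.
measured = {x: run_assay(x) for x in (0.0, 1.0)}

for cycle in range(5):
    untested = [x for x in pool if x not in measured]
    # Acquisition score: predicted activity plus an exploration bonus.
    ranked = sorted(
        untested,
        key=lambda x: predict(x, measured) + uncertainty(x, measured),
        reverse=True,
    )
    batch = ranked[:3]                 # the lab only has capacity for a subset
    for x in batch:
        measured[x] = run_assay(x)     # experimental results update the model
    best = max(measured, key=measured.get)
    print(f"cycle {cycle}: assayed {batch}, best so far x={best:.2f}")
```

The design choice that matters is the acquisition score: ranking on predicted activity alone tends to resample a narrow region, while the exploration term pushes some of the limited assay budget toward untested chemistry.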

The practical implication of this roadmap is that companies using Stage 1 or Stage 2 tools and claiming AI-native drug design are describing a fundamentally different capability from companies operating Stage 4 or Stage 5 systems. The gap in output quality is substantial. IP and business development teams evaluating partnership or acquisition candidates need to ask specifically which generation of architecture a company runs, not whether it ‘uses AI.’

QSAR, QSPR, and Virtual Screening: What They Predict Well and What They Don’t

Quantitative Structure-Activity Relationship (QSAR) and Quantitative Structure-Property Relationship (QSPR) models remain workhorse tools in lead optimization. They correlate molecular descriptors with biological activity or toxicity endpoints. When trained on large, well-curated datasets with consistent assay conditions, they predict the effect of modifications within a chemical series with useful accuracy. Where they fail is extrapolation: a QSAR model trained on one chemotype will typically not generalize to a structurally distant chemical series, because those compounds fall outside the model’s applicability domain. A separate failure mode is the activity cliff, where a small structural change produces a large, unpredictable change in activity even within a well-sampled series. Models trained on 2D descriptors are particularly prone to errors at activity cliffs, because the descriptors barely change while the activity changes sharply.
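One common guard against the extrapolation failure described above is an applicability-domain check: before trusting a prediction, measure how similar the query compound is to anything the model was trained on. The sketch below uses Tanimoto similarity on fingerprints stored as plain Python sets. The fingerprints and the 0.4 threshold are illustrative assumptions, not recommended values.

```python
def tanimoto(a, b):
    """Tanimoto similarity between fingerprints stored as sets of 'on' bit indices."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

def in_domain(query, training_set, threshold=0.4):
    """Return (is_in_domain, nearest_similarity) for a query fingerprint.

    The threshold is an illustrative assumption; in practice it would be
    calibrated against held-out prediction error for the specific model.
    """
    nearest = max(tanimoto(query, fp) for fp in training_set)
    return nearest >= threshold, nearest

# Hypothetical fingerprints for a three-compound training series.
training = [{1, 2, 3, 4, 5}, {1, 2, 3, 4, 6}, {1, 2, 3, 7, 8}]
close_analog = {1, 2, 3, 4, 9}        # shares most bits with the series
new_chemotype = {3, 20, 21, 22, 23}   # shares a single bit with the series

for name, fp in [("close_analog", close_analog), ("new_chemotype", new_chemotype)]:
    ok, sim = in_domain(fp, training)
    print(f"{name}: nearest similarity {sim:.2f}, in domain: {ok}")
```

A check like this flags when a model is being asked to extrapolate; it does nothing for activity cliffs, where the query is highly similar to the training set and the prediction is still wrong.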

Virtual screening powered by modern deep learning substantially reduces the number of synthesis-evaluation cycles needed to identify a development candidate. A well-designed virtual screening campaign can prioritize a few hundred compounds out of millions for experimental confirmation, compressing what was previously a six-to-twelve-month high-throughput screening campaign into weeks. The key qualifier is ‘well-designed’: the model must be trained on data relevant to the target class, the screening library must cover the relevant chemical space, and the docking or scoring function must be validated against experimental binding data for the specific target.
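Validating a scoring function against experimental binding data, as the paragraph above requires, is often summarized with an enrichment factor: how much more often known actives appear in the top-ranked slice than in the library as a whole. A minimal sketch follows; the library, scores, and active labels are synthetic.

```python
def enrichment_factor(scores, is_active, top_fraction=0.01):
    """Hit rate in the top-scored fraction divided by the hit rate overall."""
    n = len(scores)
    n_top = max(1, round(n * top_fraction))
    # Indices sorted from best score to worst (higher score = better here).
    order = sorted(range(n), key=lambda i: scores[i], reverse=True)
    hits_top = sum(is_active[i] for i in order[:n_top])
    total_hits = sum(is_active)
    if total_hits == 0:
        raise ValueError("no known actives to validate against")
    return (hits_top / n_top) / (total_hits / n)

# Synthetic library of 1,000 compounds; compound i gets score 1000 - i.
scores = [1000 - i for i in range(1000)]
# Ten known actives, five of which the scoring function ranks in the top 1%.
known_actives = {0, 1, 2, 3, 4, 100, 200, 300, 400, 500}
is_active = [i in known_actives for i in range(1000)]

ef = enrichment_factor(scores, is_active, top_fraction=0.01)
print(f"EF at 1%: {ef:.1f}")   # 50.0 for this toy data
```

An enrichment factor near 1 means the ranking is no better than random selection for that target, which is the situation the "well-designed" qualifier is meant to rule out.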

IP Valuation Analysis

Insilico Medicine: INS018_055 and the First Full-Cycle AI Patent Portfolio

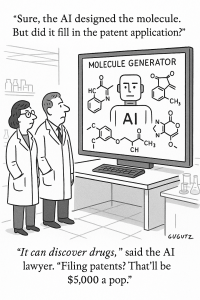

Insilico Medicine’s INS018_055, a TNIK (TRAF2- and NCK-interacting kinase) inhibitor for idiopathic pulmonary fibrosis, entered Phase 2 in June 2023; the company describes it as the first drug with both an AI-discovered target and an AI-generated molecular design to reach that stage. That distinction has direct IP consequences. Composition-of-matter patents on AI-generated molecules raise inventorship questions that patent offices in the U.S., Europe, and China are actively adjudicating. The current position at the USPTO is that a human inventor must be named, but the underlying question of how much human creative contribution is required when an AI system generates the molecule’s structure is unresolved.

For Insilico, this creates both a risk and an opportunity. The risk is that future challenges to AI-generated patent claims could affect the enforceability of its composition-of-matter protection. The opportunity is that Insilico’s platform patents, covering the method of using specific generative AI architectures to identify and optimize drug candidates, sit on more defensible ground than the compound patents themselves, because method claims are attributable to the human inventors who designed the system. Its deal with Sanofi, worth up to $1.2 billion for up to six new targets, suggests that Sanofi’s own IP team assessed the risk as manageable and valued the platform’s target-generation capability highly enough to pay a substantial upstream premium.

For pharma IP teams structuring similar deals: the cleanest approach is to ensure the licensing agreement covers both the compound IP and the method IP, with milestone structures that reduce exposure if compound patents are successfully challenged.

NLP and Semantic Knowledge Graphs in Lead Prioritization

An underappreciated application of AI in lead optimization is the integration of Natural Language Processing (NLP) with semantic knowledge graphs to assign binding affinity and molecular stability scores to candidate compounds. This approach extracts structure-activity data from published literature and patents, synthesizes it into a knowledge graph that represents relationships between molecular features and biological outcomes, and uses that graph to prioritize candidates before any synthesis occurs. The practical benefit is that it prevents teams from synthesizing compounds that the published literature would have predicted to fail, had anyone read all of it.
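The extract, build graph, prioritize sequence above can be sketched with a small weighted graph. The triples below are hypothetical stand-ins for what an NLP extraction step might return; the sketch assumes that step has already run and only shows the graph construction and scoring.

```python
from collections import defaultdict

# (molecular feature, relation, outcome, number of supporting documents)
# Hypothetical triples standing in for NLP-extracted literature and patent evidence.
triples = [
    ("sulfonamide", "improves", "binding_affinity", 4),
    ("nitro_group", "reduces", "metabolic_stability", 6),
    ("fluorophenyl", "improves", "metabolic_stability", 3),
    ("nitro_group", "improves", "binding_affinity", 1),
]

SIGN = {"improves": 1, "reduces": -1}

# Knowledge graph as nested dicts: feature -> outcome -> signed evidence weight.
graph = defaultdict(lambda: defaultdict(int))
for feature, relation, outcome, support in triples:
    graph[feature][outcome] += SIGN[relation] * support

def score(candidate_features):
    """Sum signed evidence per outcome over the features a candidate contains."""
    totals = defaultdict(int)
    for feature in candidate_features:
        for outcome, weight in graph.get(feature, {}).items():
            totals[outcome] += weight
    return dict(totals)

# Hypothetical candidates described by the features they contain.
candidates = {
    "cmpd_1": ["sulfonamide", "fluorophenyl"],
    "cmpd_2": ["sulfonamide", "nitro_group"],
}
ranked = sorted(candidates, key=lambda c: sum(score(candidates[c]).values()), reverse=True)

for name in ranked:
    print(name, score(candidates[name]))
```

Here cmpd_2 scores slightly higher on binding affinity but carries strong negative stability evidence, so it drops below cmpd_1 before anyone synthesizes it. A real system would weight evidence by source quality and assay comparability rather than raw document counts.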

Section Takeaways

Lead Discovery: Auditing the Claims Against the Evidence

- Generative molecular design has advanced through five distinct architectural generations since 2016. Companies still using VAE-only or GAN-only systems (Stages 1 and 2) are at least three generations behind the current state of the art.

- QSAR and QSPR models predict within-series modifications reliably but fail at chemical space extrapolation. Activity cliffs remain a fundamental limitation, not a solvable near-term problem.

- The AI inventorship question for composition-of-matter patents is unresolved in all major jurisdictions. Platform and method patents are currently more legally defensible than compound patents for AI-generated molecules.

- Insilico’s $1.2 billion Sanofi deal and Isomorphic’s near-$3 billion Eli Lilly and Novartis deals set the current benchmark for platform IP transaction valuations in AI drug discovery.

- Virtual screening requires target-specific model validation. Generic virtual screening claims without demonstrated accuracy on the specific target class should be treated skeptically.

Preclinical Development: Predictive Toxicology and the 3Rs

Preclinical development is where many AI efficiency claims meet reality. The core challenge is that in vitro and in vivo biological systems are complex, variable, and often poor predictors of human pharmacology. AI does not solve the translational gap between animal models and human disease; what it does is improve efficiency within those existing models and reduce the volume of physical experimentation needed to generate sufficient confidence in a candidate before IND filing.

In Silico Toxicology: Genuine Progress, Genuine Limits

Machine learning models trained on historical ADMET data can predict acute toxicity, hepatotoxicity, cardiotoxicity (specifically hERG channel inhibition), and mutagenicity (Ames test outcomes) with accuracy sufficient to filter out obvious failures before synthesis. The DE-INTERACT tool, for example, demonstrated a validation accuracy of 0.9161 for predicting drug-excipient interactions, confirmed subsequently by conventional analytical methods. That level of accuracy means the tool eliminates a substantial fraction of experimental work, not that it eliminates experimentation entirely. Predictions that fall below a confidence threshold still require wet-lab confirmation.
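The confidence-threshold rule in the last sentence above amounts to a three-way triage. A minimal sketch, assuming the model outputs a probability of toxicity per compound; the 0.8 threshold and compound IDs are illustrative, not values from any validated tool.

```python
def triage(predictions, threshold=0.8):
    """Split compounds into drop / advance / wet-lab confirmation.

    predictions: list of (compound_id, predicted probability of toxicity).
    threshold:   minimum confidence needed to act without an experiment
                 (illustrative assumption).
    """
    result = {"drop": [], "advance": [], "wet_lab": []}
    for compound_id, p_toxic in predictions:
        # Confidence in whichever class the model favors.
        confidence = max(p_toxic, 1 - p_toxic)
        if confidence < threshold:
            result["wet_lab"].append(compound_id)   # too uncertain to act on
        elif p_toxic >= 0.5:
            result["drop"].append(compound_id)      # confidently predicted toxic
        else:
            result["advance"].append(compound_id)   # confidently predicted clean
    return result

# Hypothetical model outputs.
predictions = [("A", 0.95), ("B", 0.05), ("C", 0.60), ("D", 0.30)]
buckets = triage(predictions)
print(buckets)
```

Raising the threshold sends more compounds to the bench and lowers the risk of acting on a wrong prediction; where to set it depends on the cost of a missed toxicity signal relative to the cost of an assay.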

The specific limitations are worth stating directly. Chronic toxicity, particularly drug-induced liver injury (DILI) that manifests over weeks or months of exposure, is poorly predicted by current models because the relevant training data is sparse and the biological mechanisms are heterogeneous. Idiosyncratic toxicity, which arises from individual genetic variation, is almost entirely unpredictable by population-level models. Developmental and reproductive toxicity (DART) studies still require extensive animal work because the mechanistic biology is too poorly understood to model computationally.

AI in Histopathology: Orion and Aiforia as a Case Study

Digital pathology is one of the cleaner AI success stories in preclinical work because the task, classifying cellular structures in microscopy images, maps well onto what deep learning does reliably. Orion’s application of Aiforia’s platform to astrocyte identification in brain tissue produced measurable gains: faster analysis, reduced inter-observer variability, better detection of subtle phenotypic changes, and reproducible quantitative outputs that support regulatory submission. These are not incremental improvements. Inter-observer variability in histopathology has been a persistent source of noise in preclinical data, and AI-driven standardization genuinely improves data quality in ways that downstream statistical models will amplify.

The investment implication for CROs and pharma companies is that digital pathology AI reduces the number of expert pathologists needed per study, compresses analysis timelines, and produces outputs directly compatible with automated data management systems. Companies that have not integrated digital pathology AI into their standard preclinical workflows are paying a per-study cost premium relative to peers who have.

AI and the 3Rs: Replacement, Reduction, Refinement

The 3Rs framework, developed by Russell and Burch in 1959, has shaped animal research regulation in the UK, EU, and increasingly in the U.S. through ICCVAM guidance. AI contributes to all three principles, though the contributions are unequal. Replacement is where the potential is largest but current reality is most limited. AI-driven in silico toxicology and organoid screening can replace some animal studies, particularly for acute toxicity and ADMET profiling, but cannot yet substitute for the systemic biology an intact animal provides. Reduction is where the near-term gains are clearest: better experimental design, higher-confidence candidate selection, and improved statistical power calculations all reduce the number of animals per study without reducing scientific validity. Refinement benefits from AI-enabled behavioral monitoring and more precise dosing algorithms, reducing distress within studies that still require animal subjects.

For EU-based companies operating under Directive 2010/63/EU, demonstrating 3Rs compliance is a regulatory requirement, not a choice. AI tools that improve any of the three metrics can directly support regulatory submissions and ethics committee reviews.

Section Takeaways

Preclinical AI: Efficiency Gains Without Full Translation

- AI-driven in silico toxicology filters out obvious failures in acute toxicity, hepatotoxicity, hERG inhibition, and mutagenicity, but chronic, idiosyncratic, and DART toxicities remain outside reliable predictive range.

- Digital pathology AI (Aiforia, PathAI, Proscia) reduces inter-observer variability in histopathology, producing more reproducible preclinical data and cleaner regulatory submissions.

- The 3Rs framework creates a regulatory incentive to document AI-driven reductions in animal use, which both supports ethics committee approval and reduces cost per study.

- AI does not close the translational gap between animal models and human disease. That is a biological problem, not a data science problem, and it requires better disease models, not better algorithms.

Clinical Trials: Adaptive Design, Digital Twins, and the Patient Recruitment Bottleneck

Clinical trials consume the majority of drug development cost and time, and they fail at a rate that remains stubbornly high despite decades of process improvement. AI does not change the fundamental biology that causes trials to fail, but it attacks several of the operational inefficiencies that inflate cost and extend timelines independently of whether the drug actually works.

Patient Recruitment: The Problem AI Solves Best Here

Recruitment failure or delay is responsible for approximately 80% of clinical trial timeline overruns, according to the Tufts Center for the Study of Drug Development. The traditional process, which involves site identification, investigator outreach, chart review, and patient contact, takes months and systematically excludes patients who are eligible but not connected to academic medical centers. AI addresses this through two mechanisms that operate at different points in the process.

The first is Electronic Health Record (EHR) mining. Tools from IBM Watson Health and Deep 6 AI, and platforms such as Veeva Vault, scan medical records against trial eligibility criteria and return a ranked list of potentially eligible patients in minutes rather than months. Deep 6 AI claims to reduce patient identification time from months to minutes using this approach, a claim that has been validated in pilot programs at several U.S. academic medical centers. The limiting factor is EHR data quality: records with inconsistent coding, missing laboratory values, or fragmented care histories produce noisy outputs that require human review to clear.
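The core of EHR screening, and the reason missing data creates review work, can be shown with a rule-based sketch. This is not how any named vendor's product works; the criteria, patient records, and field names are hypothetical, and production systems also parse free-text notes.

```python
from dataclasses import dataclass, field

@dataclass
class Patient:
    patient_id: str
    age: int
    diagnoses: set
    labs: dict = field(default_factory=dict)   # lab name -> latest value, may be empty

# Hypothetical eligibility criteria. Each check returns True, False,
# or None when the record lacks the data needed to decide.
CRITERIA = {
    "age 18-75": lambda p: 18 <= p.age <= 75,
    "has target diagnosis": lambda p: "E11" in p.diagnoses,
    "eGFR >= 60": lambda p: p.labs["egfr"] >= 60 if "egfr" in p.labs else None,
}

def screen(patient):
    """Return (met, unknown, failed) lists of criterion names."""
    met, unknown, failed = [], [], []
    for name, check in CRITERIA.items():
        result = check(patient)
        if result is None:
            unknown.append(name)
        elif result:
            met.append(name)
        else:
            failed.append(name)
    return met, unknown, failed

def rank(patients):
    """Drop patients failing any criterion; rank the rest by fewest unknowns."""
    rows = []
    for p in patients:
        met, unknown, failed = screen(p)
        if not failed:
            rows.append((len(unknown), p.patient_id, unknown))
    return sorted(rows)

patients = [
    Patient("P1", 54, {"E11"}, {"egfr": 82}),
    Patient("P2", 61, {"E11"}),                 # no eGFR on file
    Patient("P3", 80, {"E11"}, {"egfr": 90}),   # outside the age range
    Patient("P4", 40, {"I10"}, {"egfr": 95}),   # lacks the target diagnosis
]
shortlist = rank(patients)
for n_unknown, pid, unknown in shortlist:
    print(pid, "needs review of:", unknown or "nothing")
```

Patient P2 illustrates the data-quality bottleneck: the record neither confirms nor rules out eligibility, so a human has to chase the missing lab value.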

The second mechanism is genomic and biomarker-based stratification. For targeted therapies, finding patients with the relevant genetic alteration or biomarker profile is the prerequisite for any meaningful efficacy signal. AI stratification tools, integrated with companion diagnostic data and genomic databases, identify eligible subpopulations more precisely than traditional inclusion criteria alone. This reduces within-arm variability, which improves statistical power and potentially reduces the sample size needed to detect a treatment effect, which is a direct cost reduction.
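The link between within-arm variability and sample size follows from the standard normal-approximation formula for comparing two means, n per arm = 2(z_{1-α/2} + z_{1-β})² σ² / δ². The sketch below applies it with illustrative numbers; the standard deviations and effect size are assumptions, not trial data.

```python
from math import ceil
from statistics import NormalDist

def n_per_arm(sigma, delta, alpha=0.05, power=0.8):
    """Patients per arm for a two-sided, two-sample comparison of means.

    sigma: within-arm standard deviation of the endpoint
    delta: treatment effect the trial should be able to detect
    Uses the normal approximation; a t-based calculation gives slightly more.
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_beta = z.inv_cdf(power)
    return ceil(2 * (z_alpha + z_beta) ** 2 * sigma ** 2 / delta ** 2)

# Illustrative assumption: stratification cuts the endpoint SD from 10 to 8.
broad = n_per_arm(sigma=10, delta=5)
stratified = n_per_arm(sigma=8, delta=5)
print(f"unstratified: {broad} per arm, stratified: {stratified} per arm")
```

Because sample size scales with the square of the standard deviation, a 20% reduction in variability cuts the required enrollment by roughly a third in this example (63 versus 41 per arm).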

Adaptive Trial Design: From Protocol to Practice

Adaptive trial designs, which allow pre-specified modifications to trial parameters based on interim data, have existed as a statistical methodology for decades. What AI adds is the computational capacity to simulate thousands of potential trial trajectories in advance, identifying designs with the highest probability of success given the available prior data. This is not a trivial contribution. Platform trials like RECOVERY (for COVID-19) and I-SPY (for breast cancer) demonstrated that adaptive designs can run multiple arms efficiently and drop non-performing arms without inflating Type I error, but the design phase for such trials requires extensive simulation capacity that AI makes tractable for smaller sponsor organizations.
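Simulating trial trajectories in advance means estimating a design's operating characteristics (false-positive rate, power, expected enrollment) by Monte Carlo. The sketch below does this for a deliberately simple case: two arms, a normally distributed endpoint with known unit variance, and one pre-specified futility look. All design parameters are illustrative assumptions, and real platform trials such as those named above are far more elaborate.

```python
import random
from statistics import NormalDist, fmean

def simulate_trial(effect, n_per_arm, interim_frac, futility_z, rng):
    """Simulate one trial; return (declared_success, patients_enrolled)."""
    n_interim = int(n_per_arm * interim_frac)
    control = [rng.gauss(0.0, 1.0) for _ in range(n_per_arm)]
    treated = [rng.gauss(effect, 1.0) for _ in range(n_per_arm)]

    def z_stat(n):
        # Difference in means over its standard error (unit variance per arm).
        diff = fmean(treated[:n]) - fmean(control[:n])
        return diff / (2 / n) ** 0.5

    if z_stat(n_interim) < futility_z:
        return False, 2 * n_interim          # pre-specified early stop for futility
    critical = NormalDist().inv_cdf(0.975)   # one-sided 2.5% final test
    return z_stat(n_per_arm) > critical, 2 * n_per_arm

def operating_characteristics(effect, n_sims=2000, seed=1, **design):
    """Estimate success probability and mean enrollment under a given true effect."""
    rng = random.Random(seed)
    results = [simulate_trial(effect, rng=rng, **design) for _ in range(n_sims)]
    success_rate = fmean(1.0 if ok else 0.0 for ok, _ in results)
    avg_patients = fmean(n for _, n in results)
    return success_rate, avg_patients

design = dict(n_per_arm=100, interim_frac=0.5, futility_z=0.0)
type1, n_null = operating_characteristics(effect=0.0, **design)
power, n_alt = operating_characteristics(effect=0.4, **design)
print(f"no effect:   success rate {type1:.3f}, mean enrollment {n_null:.0f}")
print(f"true effect: success rate {power:.3f}, mean enrollment {n_alt:.0f}")
```

A futility-only stop cannot inflate Type I error, because it only removes chances to declare success. Designs that also stop early for efficacy need adjusted boundaries, and that is where simulation across many candidate designs earns its cost.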

Real-time patient monitoring through AI-enabled wearables represents a qualitative change in clinical data richness. Biofourmis uses AI-powered wearables to track vital signs continuously during trials, with anomaly detection algorithms flagging early signs of adverse events before they appear in standard scheduled assessments. That earlier detection improves patient safety and, for sponsors, reduces the risk of late-emerging safety signals that can derail an NDA submission.
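Flagging a reading between scheduled assessments is, at its simplest, a streaming outlier test. The sketch below uses a trailing-window z-score on heart rate. It is a generic technique shown for illustration, not Biofourmis's algorithm, and the window size, threshold, and readings are assumptions.

```python
from collections import deque
from statistics import fmean, pstdev

def detect_anomalies(readings, window=10, z_threshold=3.0):
    """Return indices whose value deviates from the trailing-window mean
    by more than z_threshold standard deviations."""
    history = deque(maxlen=window)
    flagged = []
    for i, value in enumerate(readings):
        if len(history) == window:
            mu, sd = fmean(history), pstdev(history)
            if sd > 0 and abs(value - mu) / sd > z_threshold:
                flagged.append(i)
                continue   # keep the outlier out of the patient's baseline
        history.append(value)
    return flagged

# Hypothetical heart-rate stream (beats per minute) with one abrupt spike.
heart_rate = [72, 74, 71, 73, 75, 72, 74, 73, 71, 72, 73, 74, 118, 72, 73]
alerts = detect_anomalies(heart_rate)
print("flagged indices:", alerts)   # [12]
```

Using each patient's own trailing baseline, rather than a population threshold, is what lets a monitor catch a change that is abnormal for that individual while still inside the textbook normal range.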

Digital Twins and Synthetic Control Arms: The Regulatory Frontier

Unlearn, a San Francisco-based company, builds ‘digital twins’ of trial participants, predictive models of how an individual patient would have progressed without treatment, based on their baseline characteristics. These digital twins can then populate a synthetic control arm, reducing or eliminating the need to randomize patients to placebo. The immediate benefit is ethical: fewer patients receive inactive treatment. The downstream benefit is operational: smaller trials with the same statistical power, which reduces cost and timeline.
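The digital-twin idea reduces to two steps: fit a prognostic model on untreated patients, then compare each treated patient's observed outcome with the outcome the model predicts for them without treatment. The sketch below uses a one-variable linear regression as the prognostic model. That choice, and all the numbers, are assumptions for illustration; the source does not describe Unlearn's actual models.

```python
from statistics import fmean

def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    mx, my = fmean(xs), fmean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, my - slope * mx

# Hypothetical historical untreated patients: baseline score -> 12-month score.
hist_baseline = [20, 25, 30, 35, 40]
hist_outcome = [26, 31, 36, 41, 46]   # untreated patients worsen by 6 points

slope, intercept = fit_line(hist_baseline, hist_outcome)

def digital_twin(baseline):
    """Predicted 12-month score for this patient had they gone untreated."""
    return slope * baseline + intercept

# Hypothetical treated patients: (baseline score, observed 12-month score).
treated = [(22, 25), (28, 30), (33, 36), (38, 41)]
effects = [observed - digital_twin(baseline) for baseline, observed in treated]
estimated_effect = fmean(effects)
print(f"estimated treatment effect: {estimated_effect:+.2f} points")
```

The estimate is only as good as the prognostic model. If the historical patients differ systematically from the trial population, the error shows up as a spurious treatment effect, which is one reason regulators ask for validation of the underlying model.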

The FDA has not issued definitive guidance on synthetic control arms as a substitute for conventional randomization, and the agency’s current position, reflected in draft guidance from January 2025, requires robust validation of the underlying predictive model. For rare diseases where randomized control is ethically or logistically problematic, the regulatory pathway for synthetic controls is clearest. For common diseases with large patient populations, the FDA’s expectation remains that randomization is the primary protection against confounding, and synthetic controls will occupy a supplementary rather than primary role for the foreseeable future.

IP Valuation Analysis

Isomorphic Labs: AlphaFold3 Licensing and the $3 Billion Partnership Model

Isomorphic Labs, a sister company to Google DeepMind, co-developed AlphaFold3 with DeepMind as a structure prediction tool that extends beyond protein folding to predict protein-ligand and protein-nucleic acid complexes. Its collaborations with Eli Lilly and Novartis carry potential value approaching $3 billion, structured as a combination of upfront payments, research milestones, and downstream royalties on approved drugs.

The IP architecture underlying this deal is distinctive. AlphaFold3’s core algorithms were developed at Google DeepMind with substantial prior art already in the public domain through AlphaFold and AlphaFold2. Isomorphic’s proprietary IP sits primarily in the application layer: the specific configurations, fine-tuning datasets, and workflows developed for drug discovery use cases. This application-layer IP is harder to patent defensively than a novel algorithm but generates value through exclusivity of access under commercial agreements rather than through patent enforcement.

The lesson for pharma deal teams is that AlphaFold3’s commercial structure reflects a reality becoming common in AI drug discovery: the foundational model is often partially or fully open-source, and the defensible IP is in proprietary training data, fine-tuning methodology, and application-specific workflow integrations. Licensing agreements should be structured around those assets, not around the foundational architecture itself.

Section Takeaways

Clinical Trials: Operational Gains, Biological Limits Unchanged

- EHR-based AI recruitment tools cut patient identification from months to minutes in validated pilot programs. EHR data quality remains the primary bottleneck, not algorithmic capability.

- AI simulation enables adaptive trial designs to be planned by organizations without large internal biostatistics teams, democratizing a methodology that was previously reserved for well-resourced sponsors.

- Synthetic control arms and digital twins have a clear regulatory pathway for rare diseases where traditional randomization is ethically or logistically problematic. For common disease indications, the FDA’s current position limits synthetic controls to a supplementary role.

- Isomorphic Labs’ near-$3 billion collaboration structure illustrates that application-layer IP (fine-tuning, datasets, workflows) commands high commercial value even when the underlying foundational model has significant public-domain prior art.

Formulation and Manufacturing: AI Beyond the Discovery Pipeline

Formulation development and manufacturing are not glamorous research areas, but they directly affect time-to-market, COGS, and the commercial viability of a drug. AI is making measurable contributions to both, and the IP implications are underappreciated relative to the attention paid to discovery-phase applications.

Excipient Optimization and Drug-Excipient Interaction Prediction

Excipients are the inactive ingredients in a pharmaceutical formulation: the binders, fillers, disintegrants, lubricants, and coating materials that determine a tablet’s stability, dissolution profile, and bioavailability. Selecting the right excipient combination and concentration has historically required extensive experimental runs, particularly for solid oral dosage forms where interactions between drug substance and excipients can affect both shelf life and in vivo performance. Machine learning models trained on historical formulation data predict optimal excipient combinations and concentrations with sufficient accuracy to substantially reduce the number of experimental iterations needed.

The DE-INTERACT tool achieved a validation accuracy of 0.9161 for drug-excipient interaction prediction, with predictions confirmed by differential scanning calorimetry and FTIR spectroscopy. That level of predictive accuracy does not eliminate bench work, but it prioritizes the experiments worth running and eliminates the obvious failures before any material is consumed. For complex formulations like amorphous solid dispersions or lipid-based drug delivery systems, where excipient compatibility is a critical success factor, the reduction in experimental burden translates directly to compressed development timelines.

AI-Driven 3D Printing for Personalized Dosage Forms

The integration of AI with pharmaceutical 3D printing represents a medium-term commercial opportunity that few companies have fully quantified. AI algorithms can customize the design and formulation of 3D-printed dosage forms to individual patient parameters (age, weight, renal function, comedications, and medical history), producing dosage forms with tailored release profiles, strengths, and geometries that mass production cannot deliver. The technology exists today in research and hospital pharmacy settings. The commercial obstacle is regulatory: FDA’s Current Good Manufacturing Practice (CGMP) regulations were written for batch production, not individualized on-demand manufacturing, and the agency’s Emerging Technology Program has engaged with several 3D printing sponsors but has not issued definitive guidance.

From an IP perspective, 3D-printed personalized dosage forms can carry formulation patents, process patents covering the AI-parameterization methodology, and potentially device patents on the printing hardware configuration. This multi-layered IP structure is more defensible than any single layer alone.

Section Takeaways

Formulation AI: Underestimated Commercial Value

- AI-driven excipient optimization with tools like DE-INTERACT achieves ~92% prediction accuracy for drug-excipient compatibility, materially reducing bench work in formulation development.

- 3D printing for personalized dosage forms is technically ready but commercially constrained by CGMP regulatory uncertainty. Companies engaging FDA’s Emerging Technology Program now will have a first-mover advantage when guidance is issued.

- Formulation and process patents on AI-optimized manufacturing workflows create IP protection that extends beyond the primary patent cliff, offering a legitimate evergreening pathway that does not require clinical re-work.

Pipeline Scorecard: Who Has What in Trials, and What It’s Worth

The number of AI-assisted drug candidates in clinical stages grew from 3 in 2016 to 17 in 2020 and 67 in 2023. Phase I success rates for this cohort, at 80% to 90%, substantially exceed the approximately 40% rate for traditionally discovered candidates. These figures come with a caveat: the cohort is young, and Phase I measures tolerability, not efficacy. The real test is Phase II and Phase III performance, where the 67 current candidates will either validate the approach or reveal its limits.

| Company | Lead Asset | Indication | Stage | Key IP Assets | Partnership / Deal Value |
|---|---|---|---|---|---|
| Insilico Medicine | INS018_055 | Idiopathic Pulmonary Fibrosis (TNIK inhibitor) | Phase 2 | Composition-of-matter + generative AI platform methods | $1.2B Sanofi deal (up to 6 targets) |
| Atomwise | ATM-001 (TYK2 inhibitor) | Autoimmune / autoinflammatory | Preclinical/IND | AtomNet platform; structure-based virtual screening IP; hits for 235 of 318 targets | Multiple pharma partnerships; Merck collaboration |
| Recursion Pharmaceuticals | REC-994 | Cerebral Cavernous Malformation (CCM) | Phase 2 complete (met primary endpoint) | Recursion OS; proprietary phenomics dataset; imaging AI patents | $50M NVIDIA; Roche/Genentech collaboration |
| Anima Biotech | 20 candidates (lead: lung fibrosis) | Immunology, Oncology, Neuroscience | Preclinical | mRNA translation modulation platform; ribosome-targeting IP | Eli Lilly collaboration; undisclosed terms |
| BPGbio | BPM31510 | Glioblastoma (GBM), Pancreatic Cancer | Phase 2 | NAD+/metabolic pathway modulation; AI-derived mechanism patents | BioTech AI Company of the Year 2024 (internal financing) |
| Isomorphic Labs | AlphaFold3-enabled pipeline | Multiple (oncology focus) | Research / Preclinical | AlphaFold3 application-layer IP; protein-ligand complex prediction | ~$3B potential (Eli Lilly + Novartis) |

IP Valuation Analysis

Atomwise: AtomNet’s 235/318 Target Hit Rate and What It’s Worth

Atomwise’s AtomNet platform identified structurally novel hits for 235 out of 318 targets tested in a published benchmarking study. That 74% per-target success rate across a diverse target panel, achieved with virtual screening alone, gives a concrete basis for comparing AtomNet with traditional high-throughput screening (HTS). HTS typically costs $500,000 to $2 million per target when accounting for library management, assay development, and confirmatory testing. If AtomNet can replace or substantially reduce HTS for 74% of a company’s target portfolio, the per-program savings are substantial.

The IP valuation implication is that Atomwise’s platform IP has quantifiable cost savings attached to it, which supports licensing and milestone structures based on per-target fees rather than royalties alone. For pharma R&D finance teams: if you use Atomwise for hit-identification virtual screening on 20 programs per year and achieve even a 50% reduction in HTS spend, the avoided cost is $5 million to $20 million per year at the HTS costs above, so the platform can pay for itself before any downstream milestone payments accrue.
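The savings argument above is simple arithmetic, and a short sketch makes the inputs explicit. The program count, HTS cost range, and 50% reduction come from the text; the function names are illustrative, and the platform fee is not given in this article, so the break-even helper only reports the fee level below which the platform would cover its own cost.

```python
def annual_hts_savings(programs_per_year, hts_cost_per_target, reduction_fraction):
    """Annual HTS spend avoided, in US dollars."""
    return programs_per_year * hts_cost_per_target * reduction_fraction


def breakeven_fee_per_target(hts_cost_per_target, reduction_fraction):
    """Per-target platform fee at which avoided HTS spend equals the fee paid.

    Assumption: one platform fee per program; real deal terms will differ.
    """
    return hts_cost_per_target * reduction_fraction


PROGRAMS_PER_YEAR = 20        # from the text
REDUCTION = 0.50              # from the text
HTS_COST_LOW = 500_000        # from the text, per target
HTS_COST_HIGH = 2_000_000     # from the text, per target

savings_low = annual_hts_savings(PROGRAMS_PER_YEAR, HTS_COST_LOW, REDUCTION)
savings_high = annual_hts_savings(PROGRAMS_PER_YEAR, HTS_COST_HIGH, REDUCTION)
fee_low = breakeven_fee_per_target(HTS_COST_LOW, REDUCTION)
fee_high = breakeven_fee_per_target(HTS_COST_HIGH, REDUCTION)

print(f"Avoided HTS spend: ${savings_low:,.0f} to ${savings_high:,.0f} per year")
print(f"Break-even fee per target: ${fee_low:,.0f} to ${fee_high:,.0f}")
```

Any per-target fee below the break-even range leaves the platform cash-positive on HTS savings alone, before milestone economics enter the picture.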

‘The Phase I success rate for AI-developed drugs stands at 80% to 90%, versus approximately 40% for traditionally discovered candidates. The real validation comes at Phase II and Phase III, where the 2025 to 2028 cohort will either confirm or correct that number.’

Based on PMC11909971 data analysis

Section Takeaways

Pipeline Scorecard: Reading the Data Honestly

- Sixty-seven AI-assisted candidates in clinical stages as of 2023 represent a roughly 22-fold increase over the 3 in 2016. The cohort is large enough to generate statistically meaningful Phase II data by 2026 to 2027.

- Phase I success rates of 80% to 90% are encouraging but measure tolerability, not efficacy. Phase II data from this cohort is the true validation event for AI-driven discovery claims.

- The $1.2 billion Insilico-Sanofi deal and the near-$3 billion Isomorphic-Lilly-Novartis deals set the current transaction benchmark. Platform IP commands higher valuation multiples than single-asset IP in these structures.

- Atomwise’s 74% hit rate across 318 targets provides a quantifiable cost-per-target utility metric that supports fee-based licensing negotiations rather than speculative royalty structures.

Market Dynamics and the Investment Landscape

The global AI in drug discovery market was valued at USD 3.0 billion in 2022. Depending on the analyst, projections for 2030 range from USD 7.94 billion (Fortune Business Insights, 12.2% CAGR) to USD 8.18 billion (Mordor Intelligence, 25.94% CAGR). Because the two 2030 endpoints are nearly identical, the gap between the CAGR estimates most likely reflects different base years and starting market sizes as much as different assumptions about adoption rates in emerging markets and the speed of regulatory acceptance for AI-derived data packages. Both projections agree on the direction: the market is growing rapidly, and the primary drivers are R&D cost pressure, generative AI adoption, and the accumulation of proprietary biological datasets that make AI models more accurate over time.
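A quick way to sanity-check competing market projections is to recompute the growth rate each one implies. The sketch below uses only the figures quoted above. The 2022 to 2030 window (8 years) is an assumption, since neither analyst's base year is stated here, and the function names are illustrative.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1 / years) - 1


def implied_start_value(end_value, rate, years):
    """Starting value needed to reach end_value at a given CAGR."""
    return end_value / (1 + rate) ** years


YEARS = 8  # assumption: 2022 base year, 2030 endpoint

# Growth implied by USD 3.0B (2022) reaching USD 7.94B (2030).
implied_rate = cagr(3.0, 7.94, YEARS)

# Base that a 25.94% CAGR would need in order to land on USD 8.18B.
implied_base = implied_start_value(8.18, 0.2594, YEARS)

print(f"Implied CAGR for 3.0 -> 7.94: {implied_rate:.1%}")
print(f"Implied 2022 base at 25.94% CAGR: USD {implied_base:.2f}B")
```

Under that assumed window, the first projection is roughly consistent with its stated CAGR, while the second would require a starting market well under half of USD 3.0 billion. That points to a different base year or a narrower market definition, not just a different view of growth.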

Strategic alliances are the dominant commercial structure in this market. Pharma companies acquire AI capability primarily through partnerships rather than internal development because the talent required (PhD-level machine learning researchers with biological domain expertise) is both scarce and expensive to retain inside a pharma organization. The AI company gets capital, data access, and validation. The pharma company gets pipeline optionality without building AI infrastructure from scratch. Both sides benefit from risk-sharing, which explains why milestone-heavy deal structures with relatively modest upfronts dominate the space.

The competitive dynamics are shifting. The first generation of AI drug discovery deals, from roughly 2016 to 2020, consisted of exploratory partnerships in which pharma companies paid to evaluate whether AI could deliver results. The current generation is operational: pharma companies are selecting AI platforms as preferred vendors for specific target classes and committing to multi-program, multi-year relationships. Insilico’s Sanofi deal for up to six targets is a clear example of this structural shift from evaluation to operational integration.

Investment Strategy

How Portfolio Managers Should Position in AI Drug Discovery

The key distinction for institutional investors evaluating AI drug discovery companies is the difference between platform companies and asset companies. Platform companies, such as Recursion, Atomwise, and Insilico, generate value through repeated application of their technology across multiple programs. Asset companies, such as BPGbio with BPM31510, generate value through the clinical success of specific drugs. The investment thesis, risk profile, and valuation methodology differ substantially.

- Platform companies should be valued on a combination of pipeline optionality and data moat: how many programs are active, how defensible the proprietary dataset is, and what it would cost a competitor to replicate the platform.

- Asset companies should be valued on conventional risk-adjusted net present value (rNPV) of their clinical candidates, with AI-driven discovery history informing but not replacing standard Phase I/II/III probability-of-success assumptions.

- The data quality moat is the most durable competitive advantage in this space. Companies like Recursion that have spent years generating proprietary phenomics data are structurally advantaged over companies that depend on public datasets, because public datasets are available to every competitor equally.

- Watch for the 2025 to 2027 Phase II readouts from first-generation AI-discovered candidates. Positive Phase II data will trigger rerating events across the sector. Negative data will accelerate consolidation.

- AI inventorship IP risk is real but not yet priced into most analyst models. Composition-of-matter patents for AI-generated molecules face potential validity challenges as patent office guidance evolves. This risk is asymmetric: it does not prevent commercial launches but could affect exclusivity periods if a Paragraph IV challenge succeeds on an inventorship theory.

The Data Problem: Why Quality Beats Quantity Every Time

Every AI model in drug discovery is only as good as the data it was trained on. This is not a novel observation, but the specific ways that data quality problems manifest in pharmaceutical AI are worth examining in detail, because they define where the realistic limits of current technology sit.

Five Failure Modes in Pharmaceutical AI Datasets

The first is distributional bias. Models trained on drug activity data that comes disproportionately from compounds similar to known drugs will systematically underestimate activity in chemically novel regions of chemical space. This is the opposite of what a generative discovery program needs: the most valuable outputs are molecules unlike anything in the training set, but those are precisely the molecules the model is least equipped to evaluate accurately.

The second is assay variability. Drug activity measurements taken across different laboratories, even using nominally identical protocols, exhibit significant variability. Studies comparing IC50 measurements for the same compound across multiple facilities routinely find two-fold to ten-fold differences that reflect experimental noise rather than true biological variation. When a model is trained on aggregated assay data from multiple sources, that noise becomes an irremovable feature of the training distribution, setting a ceiling on predictive accuracy that no amount of algorithmic sophistication can overcome.

The third is class imbalance. In most drug activity datasets, active compounds are outnumbered by inactive compounds by ratios of 50:1 to 1000:1. A naive classifier that calls everything inactive will achieve high overall accuracy while providing no useful signal. Addressing this requires deliberate data augmentation, synthetic minority oversampling, or loss function re-weighting, all of which introduce their own biases if not carefully validated.

The fourth is small dataset size for novel targets. A model predicting activity at a well-characterized kinase with thousands of known inhibitors in ChEMBL is in a fundamentally different position from a model predicting activity at a newly identified GPCR with 50 data points. Transfer learning helps, but the irreducible minimum dataset size for reliable predictions at a novel target class is a practical constraint that no amount of model sophistication eliminates.

The fifth is negative data scarcity. Drug discovery databases are dominated by positive results: compounds that showed activity get published and deposited. Compounds that showed no activity rarely do. A model trained on this biased corpus learns an incomplete picture of chemical space, overestimating the density of active compounds and potentially wasting synthesis resources on regions that have been quietly tested and found empty.
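The class imbalance failure mode (the third above) is easy to demonstrate numerically. The sketch below builds a hypothetical screening set with 10 actives and 990 inactives, a 99:1 ratio inside the 50:1 to 1000:1 range quoted above, and scores a classifier that labels everything inactive. The dataset and helper names are illustrative, and inverse-frequency class weights are shown as one common form of loss re-weighting, not as a recommended default.

```python
def recall(y_true, y_pred):
    """Fraction of true actives that the model identified."""
    true_pos = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    actives = sum(y_true)
    return true_pos / actives if actives else 0.0


def specificity(y_true, y_pred):
    """Fraction of true inactives that the model identified."""
    true_neg = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    inactives = len(y_true) - sum(y_true)
    return true_neg / inactives if inactives else 0.0


def accuracy(y_true, y_pred):
    return sum(1 for t, p in zip(y_true, y_pred) if t == p) / len(y_true)


def balanced_accuracy(y_true, y_pred):
    """Mean of recall and specificity; 0.5 means no better than chance."""
    return (recall(y_true, y_pred) + specificity(y_true, y_pred)) / 2


def inverse_frequency_weights(y_true):
    """Class weights proportional to 1 / class frequency (two classes)."""
    n = len(y_true)
    n_active = sum(y_true)
    return {1: n / (2 * n_active), 0: n / (2 * (n - n_active))}


# Hypothetical dataset: 1 = active, 0 = inactive.
y_true = [1] * 10 + [0] * 990
y_naive = [0] * 1000  # classifier that calls everything inactive

naive_accuracy = accuracy(y_true, y_naive)
naive_recall = recall(y_true, y_naive)
naive_balanced = balanced_accuracy(y_true, y_naive)
weights = inverse_frequency_weights(y_true)

print(naive_accuracy, naive_recall, naive_balanced, weights)
```

The naive model scores 99% accuracy while finding none of the actives, which is why balanced accuracy, recall, or precision-recall metrics are the more informative numbers to request from a vendor.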

Strategic Responses: What Responsible AI-Native Companies Are Doing

Companies that have confronted these problems seriously are addressing them through four approaches. The first is internal data generation at scale, creating proprietary datasets with controlled, consistent assay conditions across their own platforms. Recursion’s phenomics dataset, generated entirely in-house, is the canonical example. The second is federated learning, which allows models to be trained on data from multiple institutions without those institutions sharing raw data, addressing privacy and proprietary concerns while increasing effective dataset size. The third is rigorous uncertainty quantification, building models that report confidence intervals rather than point predictions, so that low-confidence outputs are flagged for wet-lab confirmation rather than acted upon directly. The fourth is active learning, iteratively querying experimental results to update the model in real time, which concentrates synthesis effort on the regions of chemical space where uncertainty is highest and predictions are most likely to improve.
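The third and fourth approaches can be sketched together. In the minimal example below, disagreement across an ensemble of models stands in for prediction uncertainty. That spread is a common heuristic, not a calibrated confidence interval, and the compound names, predicted values, and threshold are all hypothetical.

```python
from statistics import mean, stdev

# Hypothetical predicted potencies (e.g. pIC50) from four ensemble members.
ensemble_predictions = {
    "cmpd_A": [6.1, 6.0, 6.2, 6.1],
    "cmpd_B": [7.5, 5.2, 6.8, 4.9],
    "cmpd_C": [5.0, 5.1, 4.9, 5.0],
    "cmpd_D": [8.0, 6.5, 7.4, 5.9],
}


def summarize(predictions):
    """Return {compound: (mean prediction, ensemble standard deviation)}."""
    return {name: (mean(vals), stdev(vals)) for name, vals in predictions.items()}


def flag_low_confidence(summary, max_std):
    """Compounds whose ensemble spread exceeds the threshold go to wet-lab review."""
    return [name for name, (_, spread) in summary.items() if spread > max_std]


def select_for_assay(summary, batch_size):
    """Active-learning step: assay the compounds the ensemble disagrees on most."""
    ranked = sorted(summary, key=lambda name: summary[name][1], reverse=True)
    return ranked[:batch_size]


summary = summarize(ensemble_predictions)
flagged = flag_low_confidence(summary, max_std=0.5)  # threshold is an assumption
next_batch = select_for_assay(summary, batch_size=2)

for name, (avg, spread) in summary.items():
    print(f"{name}: {avg:.2f} +/- {spread:.2f}")
print("Flagged for confirmation:", flagged)
print("Next assay batch:", next_batch)
```

In a real loop, the assay results for the selected batch would be added to the training set and the ensemble retrained before the next selection round.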

Section Takeaways

Data Quality: The Constraint That Caps Every Other Capability

- Distributional bias, assay variability, class imbalance, small dataset sizes, and negative data scarcity are the five primary failure modes in pharmaceutical AI training data. They are structural problems, not bugs that software updates will fix.

- Proprietary, internally generated datasets are the strongest form of competitive moat in AI drug discovery, because they cannot be replicated without years of experimental work and substantial capital investment.

- Federated learning addresses data sharing barriers in multi-institutional collaborations but requires governance frameworks that define data ownership, model ownership, and IP rights across participating organizations.

- Models without uncertainty quantification are unsuitable for production drug discovery use. Any vendor that cannot report prediction confidence intervals alongside point predictions is selling a research prototype, not a validated tool.

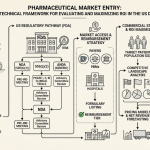

Regulatory Landscape: FDA’s January 2025 AI Guidance and What It Means in Practice

The FDA released draft guidance in January 2025 on the use of AI and machine learning across the drug development lifecycle, including pharmacovigilance, trial design, and manufacturing. This guidance does not dramatically lower the bar for what the FDA will accept as valid evidence, but it does provide explicit acknowledgment that AI-derived data can be incorporated into IND and NDA submissions, provided specific validation and documentation standards are met.

What the January 2025 Guidance Actually Requires

The FDA’s framework centers on contextual risk evaluation. Higher-risk applications, those where an AI model’s output directly influences a dose-selection or patient-safety decision, require more rigorous validation than lower-risk applications, such as using an AI tool to pre-screen literature for adverse event signals. For pharmacovigilance specifically, the agency requires that sponsors demonstrate ‘credible oversight and validation’ of AI systems used for case processing, safety signal detection, and regulatory reporting. That phrase is important: it means human review of AI outputs is required in the current framework, not optional. Fully automated pharmacovigilance remains outside the scope of what the guidance permits.

For clinical trial design, the guidance acknowledges AI simulation tools for protocol optimization but requires that the underlying models be validated against historical trial data before being used prospectively. This validation standard is achievable for common disease areas with extensive historical data but challenging for rare diseases where historical trial data is sparse, which is precisely the population where AI-driven design would provide the most value.

For manufacturing, the FDA’s position on continuous manufacturing and AI-driven process control is more permissive, reflecting the agency’s longer history of engagement with Process Analytical Technology (PAT) and Quality by Design (QbD) frameworks. AI quality control systems that operate within a validated PAT framework can achieve regulatory acceptance under existing CGMP guidance without waiting for new AI-specific rules.

EU and ICH Regulatory Alignment

The European Medicines Agency (EMA) published its own AI strategy in 2023 and has been developing technical guidelines through the ICH M14 guideline on general principles for AI in drug development. The ICH process, which creates harmonized guidance across FDA, EMA, and PMDA (Japan), means that well-structured AI validation packages prepared for FDA submission will generally satisfy EMA and PMDA expectations with modest adaptation. This alignment reduces the global regulatory burden for companies with multi-regional development programs, though implementation timelines vary across jurisdictions.

Section Takeaways

Regulatory Reality: Credible Oversight Is Non-Negotiable

- FDA’s January 2025 draft guidance explicitly permits AI-derived data in submissions but requires contextual risk evaluation, validation documentation, and human oversight for high-risk applications. It does not lower evidentiary standards.

- Fully automated pharmacovigilance is outside the current regulatory boundary. Human review of AI outputs remains mandatory for case processing and safety signal reporting.

- AI systems operating within existing PAT and QbD manufacturing frameworks can achieve regulatory acceptance under current CGMP guidance without new AI-specific rules.

- ICH M14 harmonization means FDA-ready AI validation packages are largely transferable to EMA and PMDA submissions, reducing global regulatory duplication for multi-regional programs.

- Regulatory engagement is most effective when initiated early: companies that brief FDA under the Emerging Technology Program before filing their first AI-assisted IND face fewer unexpected questions at the IND review stage.

Algorithmic Bias, Privacy, and Accountability: The Risks That Are Underpriced

The ethical and legal risks of AI in drug discovery are real, they are specific, and they are currently underpriced in most analysts’ models. That is not because they are unlikely to materialize, but because the consequences tend to arrive late in the development cycle, when they are most expensive to address.

Algorithmic Bias: Where It Comes From and What It Costs

Algorithmic bias in drug discovery originates from the same source as dataset bias: training data that does not represent the population the drug will ultimately treat. If a target identification model was trained predominantly on genomic data from European ancestry populations, its outputs will be systematically less accurate for patients of African, Asian, or Latin American ancestry. This is not a hypothetical problem. The Pharmacogenomics Knowledge Base (PharmGKB) contains well-documented examples of pharmacogenomic associations that differ significantly across ancestry groups, and models that do not account for this variation will generate target hypotheses and clinical predictions that perform worse in underrepresented populations.

The commercial cost of failing to address this at the discovery stage is a drug whose Phase III trial shows efficacy in the enrolled population, which is predominantly of European ancestry in most major trials, but which produces variable or inferior outcomes once post-marketing surveillance covers a more diverse patient population. That pattern can trigger post-approval commitments, label restrictions, or, in severe cases, voluntary or mandatory market withdrawal. None of these are low-probability events for drugs that reach approval; they are near-certainties if diversity was not built into the discovery and trial processes from the start.

Data Privacy: Federated Learning Is Not a Complete Solution

Using patient EHR data, genomic data, and wearable-derived health data to train and validate AI models creates HIPAA, GDPR, and emerging state-level privacy obligations that are not fully resolved by federated learning. Federated learning prevents raw data from leaving an institution but does not prevent model inversion and gradient leakage attacks, adversarial techniques that can reconstruct training data from a model’s outputs or from the gradients shared during training. For highly sensitive genomic data, where identification of an individual from even partial genomic information is technically feasible, this attack vector is a genuine security risk rather than a theoretical one.

Data use agreements (DUAs) in AI drug discovery partnerships need to explicitly address model training rights, model ownership, and the conditions under which the trained model can be applied to new datasets. Agreements that are ambiguous on these points create IP disputes at the most commercially sensitive moments, typically when the partnership is preparing for an asset licensing deal or M&A transaction.

Accountability: The Black Box Problem and Explainable AI

Deep learning models, particularly large transformer-based models, do not provide human-readable explanations for their outputs. A model that recommends deprioritizing a clinical candidate based on predicted toxicity cannot tell the medicinal chemist which molecular feature drove that prediction. This opacity creates several distinct problems. First, the scientist cannot validate the reasoning, so the model’s errors are invisible until they manifest as wet-lab failures. Second, regulatory submissions that rely on AI model outputs require explanations of how the AI reached its conclusions, and ‘the model said so’ does not meet FDA documentation standards. Third, when an AI-assisted drug is eventually approved and a post-market safety signal emerges, the question of whether the AI system should have flagged the risk will generate litigation discovery requests that are nearly impossible to satisfy for opaque models.

Explainable AI (XAI) methods, including SHAP values, LIME, attention visualization for transformers, and interpretable surrogate models such as decision trees trained to approximate neural network outputs, address this problem to varying degrees. They are not perfect solutions; a SHAP explanation of a 100-layer neural network is an approximation, not a ground-truth account of how the model works. But they produce documentation that can support regulatory submissions and legal defensibility, which makes them a business requirement rather than an optional add-on.
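To show what a feature attribution looks like, the sketch below computes exact Shapley values for a deliberately tiny scoring function by enumerating every feature subset. This is the quantity that SHAP-style tools estimate. Exact enumeration is only feasible for a handful of features, which is why production tools rely on approximations. The toy toxicity score, its coefficients, and the feature names are hypothetical.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley attribution of model(x) - model(baseline) to each feature.

    Features outside a coalition are set to their baseline value.
    """
    n = len(x)

    def value(coalition):
        mixed = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(mixed)

    attributions = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                coalition = set(subset)
                phi += weight * (value(coalition | {i}) - value(coalition))
        attributions.append(phi)
    return attributions


def toy_toxicity_score(features):
    """Hypothetical score with one interaction term (logP x nitro group)."""
    logp, scaled_mol_weight, has_nitro = features
    return 0.5 * logp + 0.2 * scaled_mol_weight + 1.5 * has_nitro + 0.3 * logp * has_nitro


candidate = [3.0, 4.0, 1.0]   # hypothetical molecule
reference = [1.0, 3.0, 0.0]   # hypothetical baseline molecule

phis = shapley_values(toy_toxicity_score, candidate, reference)
for name, phi in zip(["logP", "scaled_mol_weight", "has_nitro"], phis):
    print(f"{name}: {phi:+.2f}")
```

The attributions sum to the difference between the candidate's score and the baseline's, which is the property that makes this kind of output usable as documentation of which molecular features drove a prediction.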

Investment Strategy

Risk Pricing for Algorithmic Bias and Privacy Liability

Institutional investors and deal teams should apply the following adjustments when evaluating AI drug discovery assets:

- Discount clinical stage assets where the AI discovery platform was trained on non-diverse datasets without documented bias mitigation. Post-market performance risk in underrepresented populations is a real commercial exposure that standard rNPV models rarely capture.

- Require XAI documentation as part of technical due diligence for any AI-platform acquisition. Companies that cannot produce interpretable model explanations will face compounding regulatory and litigation risk as AI oversight expectations tighten.

- Assess DUA terms in platform company partnerships. Ambiguous data rights provisions are a red flag for IP disputes that will surface at M&A or licensing inflection points.

- Federated learning is a necessary but not sufficient privacy protection. Ask target companies specifically whether they have conducted model inversion attack testing on their federated systems.

Investment Strategy for Portfolio Managers: A Decision Framework

The AI drug discovery investment universe can be segmented into four categories, each with distinct risk-return profiles, IP characteristics, and valuation methodologies. Portfolio managers who conflate these categories will misprice both the upside and the downside.

Investment Framework

Four Categories of AI Drug Discovery Investment

Category 1

AI-native platform companies with proprietary datasets (Recursion, Insilico, Atomwise): Value on platform optionality and data moat depth. The key metric is cost-to-replicate for the proprietary dataset, not the algorithmic architecture. These companies are structurally positioned to capture royalties across multiple programs. Primary risk: clinical failure of first-generation assets could impair market confidence in the platform before the pipeline matures.

Category 2

Big pharma with AI-integrated pipelines (Pfizer, Novartis, AstraZeneca, Roche): AI is a process efficiency play for these companies, not a standalone investment thesis. The relevant questions are how much AI adoption is reducing per-program cost and whether it is improving Phase II success rates relative to historical company averages. These are measurable metrics that are beginning to appear in company R&D productivity disclosures.

Category 3

AI infrastructure and tooling companies (NVIDIA for pharma, cloud providers, specialized software vendors): These companies capture value through API access, GPU compute revenue, and software licensing regardless of which drug candidate succeeds. They carry the lowest binary clinical risk in the value chain. NVIDIA’s $50 million Recursion investment is strategically logical from this perspective: if AI drug discovery scales, NVIDIA captures a share of the compute spend across every company in the sector.

Category 4

Single-asset AI-derived clinical companies (BPGbio, early Recursion programs): Value on standard rNPV methodology, with a Phase I success rate prior of 80% to 90% rather than the historical 40%. This is a meaningful adjustment to standard models but does not change the evaluation framework. Phase II and Phase III remain the value-creation events, and the biology still determines the outcome.
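The effect of changing only the Phase I prior can be seen in a small rNPV sketch. Only the two Phase I probabilities (40% historical, and 85% as a midpoint of the 80% to 90% range) and the roughly 30% Phase II rate come from this article. Every other number below is a placeholder assumption chosen for illustration: Phase III and approval probabilities, stage costs, durations, launch value, and discount rate. The function names are also illustrative.

```python
def rnpv(stages, launch_value, discount_rate):
    """Risk-adjusted NPV for a candidate about to enter the first listed stage.

    stages: ordered list of (name, probability_of_success, cost, duration_years).
    Each stage cost is paid at the start of the stage, weighted by the
    probability of reaching it; launch_value is received after the last stage.
    """
    value = 0.0
    prob_reached = 1.0
    elapsed = 0.0
    for _, pos, cost, years in stages:
        value -= prob_reached * cost / (1 + discount_rate) ** elapsed
        prob_reached *= pos
        elapsed += years
    return value + prob_reached * launch_value / (1 + discount_rate) ** elapsed


def cumulative_pos(stages):
    """Probability of clearing every listed stage."""
    total = 1.0
    for _, pos, _, _ in stages:
        total *= pos
    return total


def build_stages(phase1_pos):
    # Costs in USD millions; all values except phase1_pos and the
    # Phase II rate are placeholder assumptions.
    return [
        ("Phase I", phase1_pos, 10.0, 1.5),
        ("Phase II", 0.30, 40.0, 2.5),
        ("Phase III", 0.60, 150.0, 3.0),
        ("Approval", 0.90, 5.0, 1.0),
    ]


LAUNCH_VALUE = 2000.0   # placeholder: value of post-launch cash flows at launch
DISCOUNT_RATE = 0.10    # placeholder

traditional = build_stages(0.40)
ai_prior = build_stages(0.85)

rnpv_traditional = rnpv(traditional, LAUNCH_VALUE, DISCOUNT_RATE)
rnpv_ai = rnpv(ai_prior, LAUNCH_VALUE, DISCOUNT_RATE)

print(f"Cumulative PoS: {cumulative_pos(traditional):.2%} vs {cumulative_pos(ai_prior):.2%}")
print(f"rNPV (USD M): {rnpv_traditional:.1f} vs {rnpv_ai:.1f}")
```

The framework is unchanged between the two runs; only one input moves. Under these placeholder inputs the result still depends mostly on the Phase II and Phase III assumptions, which is consistent with treating those readouts as the value-creation events.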

The democratization thesis articulated by Aaron Ring at Fred Hutch Cancer Center, that AI is collapsing the resource barriers that previously allowed only large pharma companies to run systematic discovery programs, has direct portfolio implications. If academic labs and small biotechs can now run AI-driven discovery programs that previously required Big Pharma infrastructure, the supply of early-stage assets will expand substantially. That expands the pool of potential acquisition targets for large pharma, which supports deal multiples in the early-stage biotech sector more broadly, not just in AI-native companies.

The near-term catalyst calendar is straightforward. Phase II readouts from the 2023 cohort of AI-discovered drugs will begin arriving in 2025 and accumulate through 2027. Positive Phase II data in indication-relevant endpoints will trigger a broad sector rerating. Negative data, particularly for a high-profile program like INS018_055, will accelerate consolidation and create acquisition opportunities for well-capitalized strategic buyers.

FAQ: Hard Questions, Straight Answers

How does AI actually compress drug development timelines? Which stages benefit most and which see little change?

AI delivers the largest time reductions in the earliest stages: target identification (weeks instead of months), hit identification through virtual screening (days instead of a high-throughput screening campaign), and lead optimization through generative design (fewer synthesis-test cycles). Preclinical timelines compress through faster toxicology prediction and automated histopathology analysis. Clinical trial recruitment improves through EHR mining. The stages where AI has minimal impact on timeline: human clinical pharmacology studies, Phase III patient accrual in competitive enrollment environments, and regulatory review, where the FDA’s clock runs on its own schedule. The net effect is a projected 40% reduction in overall development timeline, concentrated in the pre-IND period.

What does ‘AI-designed’ actually mean for a drug’s patent portfolio, and is it more or less legally defensible than a traditionally discovered drug?

Currently, ‘AI-designed’ creates complexity for composition-of-matter patents because all major patent jurisdictions require a human inventor. The USPTO’s position, as of 2024, is that a human must make a significant creative contribution to the claimed invention. If an AI system generates the molecular structure without meaningful human intervention beyond setting up the model, the inventorship claim is vulnerable to challenge. Platform and method patents covering the AI system itself are on more defensible ground because the human engineers who built the system are clearly the inventors. For IP strategy, the safest current approach is to document human scientific judgment at key decision points in the AI-assisted design process, creating a defensible inventorship record even when the molecular structures themselves were machine-generated.

Is the 80% to 90% Phase I success rate for AI-discovered drugs a reliable predictor of eventual approval rates?

Not yet. Phase I measures tolerability, not efficacy, and the cohort of AI-discovered drugs that has completed Phase I is small and young. The selection effect is also real: first-generation AI programs were often chosen for clinical testing precisely because they had exceptional preclinical data, which may have pulled the highest-quality candidates into the early cohort. The meaningful statistical test will be Phase II efficacy readouts from the 2023 cohort, arriving between 2025 and 2027. If Phase II success rates for AI-discovered drugs significantly exceed the historical rate of approximately 30%, the case for AI’s efficacy-improving effect will be substantially stronger.
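The small-cohort caveat can be quantified with a confidence interval on the observed success rate. The sketch below uses the Wilson score interval and the n=21 sample size noted alongside the Phase I benchmark in this article. The count of 18 successes is a hypothetical value chosen to fall inside the reported 80% to 90% range, not a figure from the source.

```python
from math import sqrt


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 for ~95%)."""
    p_hat = successes / n
    denom = 1 + z ** 2 / n
    centre = (p_hat + z ** 2 / (2 * n)) / denom
    half_width = z * sqrt(p_hat * (1 - p_hat) / n + z ** 2 / (4 * n ** 2)) / denom
    return centre - half_width, centre + half_width


N_COMPLETED = 21   # sample size cited with the Phase I benchmark
N_SUCCESS = 18     # hypothetical count (about 86%)

ci_low, ci_high = wilson_interval(N_SUCCESS, N_COMPLETED)
print(f"Observed: {N_SUCCESS / N_COMPLETED:.0%}, ~95% interval: {ci_low:.0%} to {ci_high:.0%}")
```

With a sample this small the interval is wide, even though its lower bound in this hypothetical case still sits above the roughly 40% historical rate. The interval captures sampling noise only and does nothing to correct for the selection effect described above.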

How should pharma IP teams evaluate AI platform partnerships to protect downstream exclusivity?

Four provisions deserve particular attention. First, data rights: the agreement must clearly specify which party owns training data generated during the collaboration and whether the AI company can use collaboration-generated data to improve models used for other partners. Second, model ownership: who owns the fine-tuned model weights generated during the collaboration? Third, exclusivity scope: is the AI platform’s exclusivity limited to specific target classes or disease areas, and is that exclusivity temporal or perpetual? Fourth, sublicensing rights: if the pharma company is acquired or acquires another company during the collaboration, do the AI platform rights transfer, and under what conditions can they be sublicensed? These provisions can meaningfully affect the commercial value of any asset generated through the collaboration.

Can smaller biotechs with limited resources actually compete using AI, or does this technology just extend Big Pharma’s advantage?

AI genuinely does lower the resource threshold for early-stage drug discovery. A well-resourced academic lab or lean biotech can now run generative chemistry programs using commercially available platforms like Schrödinger, OpenEye, or cloud-accessible foundation models that would have required a full computational chemistry department a decade ago. The real resource moat is not algorithmic access; it is proprietary biological data generation and wet-lab validation capacity. Small organizations that lack the ability to generate large, high-quality proprietary datasets will depend on public data, which provides no competitive differentiation. The structural advantage of large pharma and well-funded AI biotechs is their ability to invest in data generation at scale, not their access to algorithms.

What does FDA’s January 2025 AI guidance mean for companies planning to include AI-derived data in IND or NDA submissions?

The January 2025 document is a draft guidance, so its recommendations are nonbinding, but it sets out three expectations that many current AI tools do not meet out of the box: documented model validation against relevant biological data, uncertainty quantification on model outputs, and human oversight of AI-generated decisions that influence patient safety. Companies planning to reference AI-derived toxicology predictions or target identification analyses in IND submissions should prepare a model card for each AI tool used, documenting training data provenance, validation methodology, known limitations, and the human review process applied to model outputs. The FDA has stated it will accept this documentation in the existing IND module structure; no new submission format is currently required.
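As an illustration of the model card described above, the following sketch captures the four documented elements in a small data structure and flags any that are still empty. The class name, field names, and example values are assumptions made for this sketch; they are not an FDA-defined schema or submission format.

```python
from dataclasses import dataclass, field, fields


@dataclass
class ModelCard:
    """Hypothetical per-tool record; field names are illustrative only."""

    tool_name: str
    training_data_provenance: str
    validation_methodology: str
    known_limitations: list[str] = field(default_factory=list)
    human_review_process: str = ""

    def missing_fields(self) -> list[str]:
        """Return the names of fields left empty (empty string or list)."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Example with placeholder values for a made-up toxicology predictor.
card = ModelCard(
    tool_name="tox-predictor (hypothetical)",
    training_data_provenance="Internal assay data plus public sources",
    validation_methodology="Held-out test set with external benchmark",
)

print(card.missing_fields())
```

A check like `missing_fields` could run before a submission package is assembled, so that incomplete documentation is caught internally and not during regulatory review.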

Key Quantitative Benchmarks: Traditional vs. AI-Accelerated Discovery

| Metric | Traditional Method | AI-Accelerated | Improvement | Source / Notes |
|---|---|---|---|---|
| Average time to market | 12 to 15 years | Potentially under 24 months (discovery phase); 40% overall reduction projected | ~40% timeline compression | Tufts CSDD; Mordor Intelligence 2025 |
| Average NME cost (capital-adjusted) | US $1B to $2.8B | Preclinical costs cut 28%; discovery costs cut >40% | Significant per-program savings | PMC dev.to/clairlabs analysis |
| Phase I clinical success rate | ~40% | 80% to 90% | 2x to 2.25x improvement | PMC11909971 (as of December 2023, n=21) |
| Patient recruitment speed | Months per site | Minutes for candidate identification (Deep 6 AI, IBM Watson Health) | ~2x faster enrollment rate | WIPO Global Health blog; appwrk.com |
| Protein structure prediction | Weeks per structure | Under 48 hours (AlphaFold3) | >95% time reduction | Google DeepMind / Isomorphic Labs |
| HTS hit rate (novel targets) | 0.01% to 0.1% typical | Hits identified for ~74% of 318 targets (Atomwise AtomNet) | Order-of-magnitude improvement | Atomwise published benchmarking study; share of targets with at least one hit, not a per-compound rate |
| AI clinical candidates (cumulative) | 3 (2016) | 67 (2023) | 22x in 7 years | PMC11909971 |
| AI drug discovery market size | US $3.0B (2022) | US $7.9B to $8.2B projected (2030) | 12% to 26% CAGR | Fortune Business Insights; Mordor Intelligence |
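The ratio and growth figures in the table can be sanity-checked with simple arithmetic. The sketch below uses only numbers that appear in the table; the helper names `fold_change` and `cagr` are assumptions of this example, and the eight-year span assumes the 2022 and 2030 endpoints shown in the market-size row.

```python
def fold_change(before: float, after: float) -> float:
    """Ratio of the later value to the earlier value."""
    return after / before


def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` years."""
    return (end_value / start_value) ** (1 / years) - 1


# Phase I success: ~40% historical vs 80% to 90% reported for AI candidates.
phase1_low = fold_change(40, 80)
phase1_high = fold_change(40, 90)

# Cumulative AI clinical candidates: 3 (2016) to 67 (2023).
candidate_growth = fold_change(3, 67)

# Market size: US $3.0B (2022) to US $7.9B-$8.2B (2030), in billions.
cagr_low = cagr(3.0, 7.9, years=8)
cagr_high = cagr(3.0, 8.2, years=8)

print(f"Phase I improvement: {phase1_low:.2f}x to {phase1_high:.2f}x")
print(f"Candidate growth: {candidate_growth:.1f}x")
print(f"Implied CAGR: {cagr_low:.1%} to {cagr_high:.1%}")
```

The CAGR implied by these two endpoints sits near the low end of the 12% to 26% range quoted in the table, which suggests the higher published figures rest on different base years or market definitions.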

Master Summary: What Decision-Makers Need to Act On Now

Ten Conclusions for Pharma IP Teams, R&D Leads, and Portfolio Managers

- AI compresses discovery and early preclinical timelines by 40% or more in programs where the technology has been properly deployed. Those gains do not extend equally to every pipeline stage; clinical and regulatory timelines follow their own constraints.

- Phase I success rates of 80% to 90% for AI-discovered candidates are encouraging but rest on a small sample (n=21 as of December 2023). Phase II data, arriving 2025 to 2027, will be the decisive validation event for the entire asset class.

- Platform IP (datasets, methods, workflow integrations) is more legally defensible than composition-of-matter patents for AI-generated molecules, given unresolved inventorship questions across all major patent jurisdictions.

- Transaction benchmarks are set: up to $1.2 billion for a six-target platform deal (Insilico-Sanofi) and nearly $3 billion in combined potential value for foundational model access (Isomorphic-Lilly-Novartis). These headline figures include contingent milestone payments. IP and BD teams should use them as reference points for comparable deal structures.

- The data moat is the primary competitive differentiator. Proprietary, internally generated datasets that competitors cannot replicate without years of effort are worth more to long-term IP strategy than any individual patent.

- Algorithmic bias creates post-market commercial risk that standard rNPV models do not capture. Drugs discovered with models trained on non-diverse data may underperform in diverse real-world patient populations.

- FDA’s January 2025 draft AI guidance calls for model validation documentation, uncertainty quantification, and human oversight for high-risk applications. Companies without these capabilities need them before filing their first AI-assisted IND.

- Data use agreement terms govern who benefits from AI drug discovery partnerships. Ambiguous provisions on training data rights, model ownership, and exclusivity scope create IP disputes at the worst possible moments.

- AI is democratizing early-stage discovery for smaller organizations that lack HTS infrastructure. This will expand the early-stage acquisition target pool for large pharma, supporting deal multiples across the sector.

- Companies that cannot articulate which generation of generative architecture they run, what their model validation process is, and how they handle prediction uncertainty are not ready for production AI drug discovery, regardless of what their marketing materials state.

Primary Sources and References

- PMC11510778 — AI Applications in Drug Discovery and Development (2024)

- PMC7577280 — Artificial Intelligence in Drug Discovery and Development (2020)

- ZeClinics — Drug Discovery and Development: A Step-By-Step Process

- ACS Omega (DOI: 10.1021/acsomega.5c00549) — AI-Driven Drug Discovery: A Comprehensive Review (2025)

- dev.to/clairlabs — Machine Learning Techniques for Target Identification

- PatSnap Synapse — How AI Helps Optimize Lead Compounds in the Drug Discovery Pipeline

- PMC11909971 — Integrating AI in Drug Discovery and Early Development (2025)

- Aiforia — AI in Drug Discovery (Orion/Astrocyte case study)

- ModernVivo — AI Optimization and Streamlining Drug Development

- WIPO Global Health Blog — AI in Drug Development: Transforming Clinical Trials

- Labiotech.eu — 12 AI Drug Discovery Companies (2025)

- Fortune Business Insights — AI in Drug Discovery Market Report to 2030

- Mordor Intelligence — AI in Drug Discovery Market Size, Growth and Drivers to 2030

- GlobeNewswire (May 2025) — AI in Drug Discovery Market Outlook 2025 to 2030

- Recursion Pharmaceuticals IR — NVIDIA Collaboration and $50M Investment Announcement

- Nasdaq — Recursion Pharmaceuticals: The AI Drug Discovery Play

- Duane Morris — FDA AI Guidance: A New Era for Biotech and Regulatory Compliance (February 2025)

- Applied Clinical Trials Online — How AI and Regulation Are Reshaping Drug Safety

- ResearchGate (publication 389518519) — Ethical Considerations and Bias Mitigation in AI-Driven Biopharma

- Creative Biolabs AI Blog — Addressing Data Challenges in AI-Driven Drug Discovery

- Timmerman Report (June 2025) — AI Drug Discovery: A Revolution for the Underdogs

- NC3Rs — The 3Rs Framework

- Frontiers in Pharmacology (2025) — Practical Implementation of 4R Principles in Pharmacology

- PMC10302890 — The Role of AI in Drug Discovery: Challenges, Opportunities, and Strategies

- PMC11069149 — Tribulations and Future Opportunities for AI in Precision Medicine

- PMC11800368 — AI in Action: Redefining Drug Discovery and Development

- Citeline — The Future of AI and Data Science in Pharma: 2025 and Beyond

- appwrk.com — Top AI Use Cases in Life Sciences